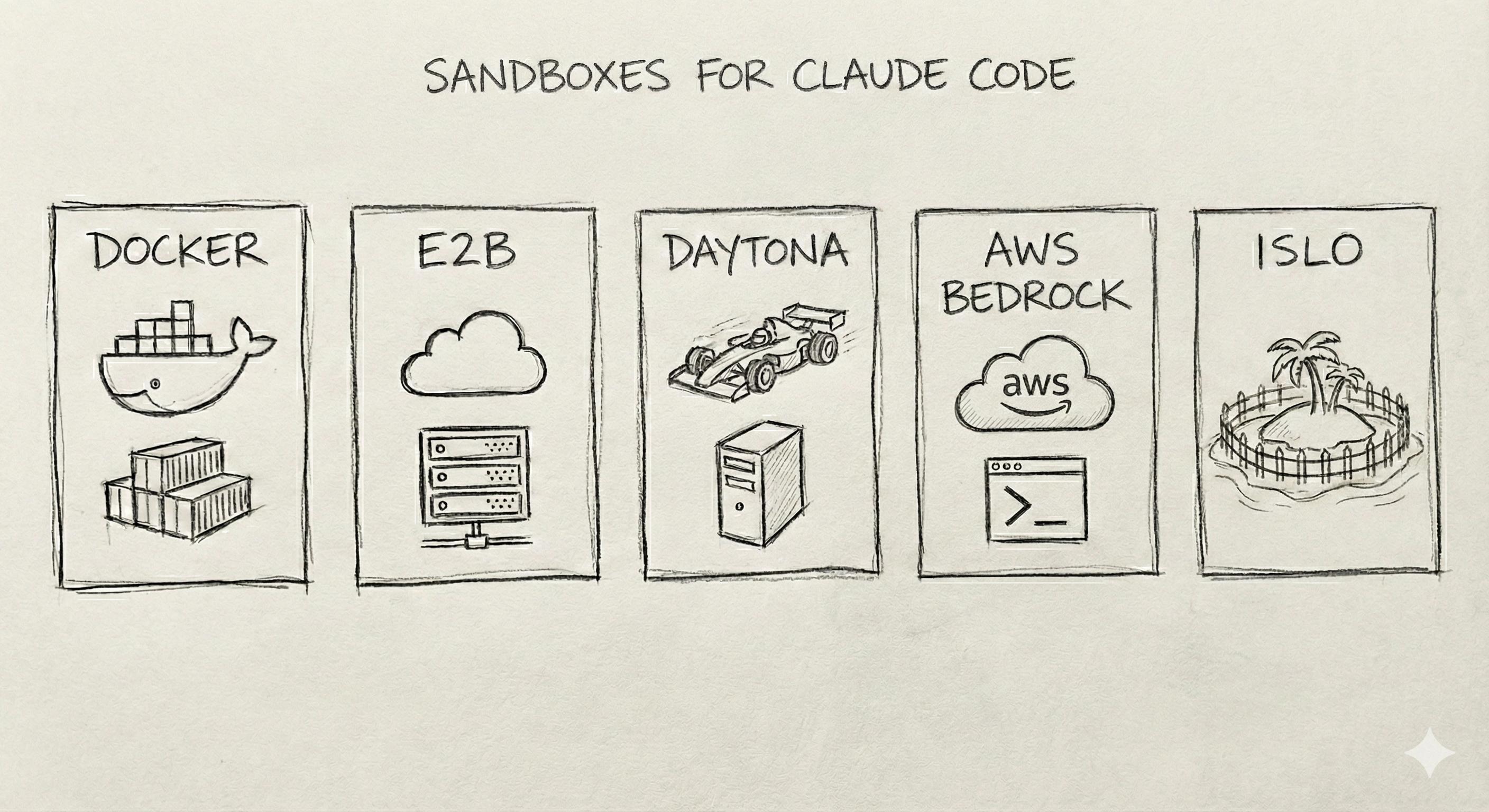

Claude Code can run commands, edit files, and execute builds on your machine. Letting it do that without a sandbox means giving an AI agent the same access you have - great for flow, bad for safety. Sandboxes give you isolation: the agent runs in a cage, and your SSH keys and host filesystem stay out of reach. But isolation isn’t the whole story - a sandbox can also give you access from your phone, teleportation between your local environment and a remote one, and more. It is the infrastructure - the computer, really - that runs your agents.

I’ve been working on agent security and sandboxing for a while. These are the five options I’d actually consider, and why you might pick one over another.

1. Docker Sandbox

Docker’s native sandbox mode - Claude Code supports it directly via `docker sandbox run`. Easiest path if you already use Docker.

Why it’s solid:

- Built-in integration with no extra setup, no third-party CLI. It just works locally.

- Better isolation than no isolation.

Why it’s not enough:

- It’s only isolation. No policy layer, no approvals, no audit trail unless you build it yourself.

- Configuration is on you. Dangerous flags (`-v /:/host`, `--mount-docker-socket`) can undo isolation entirely - and something can change how you invoke the sandbox without you noticing.

Pick this if: You want the path of least resistance and you’re willing to own the config. Or you’re okay with “good enough” and you’ll add guardrails later (spoiler: most people don’t).
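One way to own the config is to lint the command line before it runs. The sketch below is a minimal, non-exhaustive example: `find_risky_flags` is a hypothetical helper, the two flags it looks for are the ones mentioned above, and the extra check for a `docker.sock` path inside a volume mount is my own assumption about what else is worth flagging.

```python
import shlex
from typing import List


def find_risky_flags(argv: List[str]) -> List[str]:
    """Return human-readable findings for flags that weaken isolation.

    Illustrative only: it covers the flags named in this post plus one
    assumed variant (a volume mount that exposes docker.sock).
    """
    findings = []
    i = 0
    while i < len(argv):
        arg = argv[i]
        value = None
        # Volume mounts appear as "-v SRC:DST", "--volume SRC:DST" or "--volume=SRC:DST".
        if arg in ("-v", "--volume") and i + 1 < len(argv):
            value = argv[i + 1]
            i += 1  # skip the value we just consumed
        elif arg.startswith("--volume="):
            value = arg.split("=", 1)[1]

        if value is not None:
            if value.startswith("/:"):
                findings.append(f"host root mounted: {value}")
            if "docker.sock" in value:  # assumption: socket exposed via a mount
                findings.append(f"Docker socket mounted: {value}")
        elif arg == "--mount-docker-socket":
            findings.append("Docker socket mounted: --mount-docker-socket")
        i += 1
    return findings


if __name__ == "__main__":
    command = "docker sandbox run -v /:/host claude"
    for finding in find_risky_flags(shlex.split(command)):
        print(finding)
```

Running a check like this in the wrapper script or alias that launches the sandbox catches the case where the invocation drifts without you noticing.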

2. E2B

Open-source cloud infra for AI agents. Firecracker microVMs - hardware-level isolation, not just containers.

Why it’s solid:

- MicroVM isolation. Stronger than Docker; you’re not sharing a kernel with the agent.

- A fast, capable runtime for LLM-written code.

- LLM-agnostic. Claude, GPT-4, Llama, whatever. Swap models without swapping sandboxes.

- Install packages, use the filesystem, persist sessions (e.g. up to 24 hours). Feels like a real machine.

- Self-hosting is possible (still experimental, but the option exists).

Why it might not fit:

- No one-click Claude Code integration. You wire it in - API or SDK. If you want “install and go,” this isn’t it.

- Sandbox-only. No built-in HITL or approval workflows. You get isolation but you don’t get more features on top.

Pick this if: You want strong isolation and don’t mind integrating. Good for teams that already have an agent runtime and need a better cage.

3. Daytona

Agent-native infra with speed as the headline. Daytona claims sub-90ms sandbox spin-up in many cases.

Why it’s solid:

- A capable runtime for LLM-written code.

- Really fast. Cold start doesn’t feel like cold start.

- Native Docker compatibility. No proprietary image format - use what you already have.

- Stateful sandboxes - pick up where you left off.

Why it might not fit:

- Some features still evolving. Not a dealbreaker, but set expectations.

- No direct “Claude Code plugin.” You integrate. Same story as E2B - you’re gluing pieces together.

Pick this if: Cold start latency actually bothers you and you want Docker-friendly, stateful sandboxes. Speed is the sell.

4. AWS Bedrock AgentCore (Code Interpreter)

Not a standalone sandbox - it’s part of AWS Bedrock’s agent platform, which bundles runtime, gateway, memory, identity, observability, and policy. The code interpreter is the sandbox (Python, JS, TS), with fixed resources (e.g. 2 vCPU, 8 GB RAM) and configurable network access.

Why it’s solid:

- Full platform. You’re not just buying execution; you’re buying the whole stack. Gateway, memory, identity, observability - it’s all there.

- Managed and scalable. Fits enterprises already on AWS. Procurement is easier when it’s already on the bill.

- Compliance checkbox. SOC2, HIPAA, etc. If you need “we run on AWS and here’s the audit,” AgentCore fits.

Why it might not fit:

- AWS-only. No on-prem. If you’re not in the cloud or you’re multi-cloud, this isn’t the answer.

- Fixed resource shapes. Not “bring your own” arbitrary containers. You get what they give you.

- No “Claude Code on your laptop” flow. This is for server-side agents. If you’re running Claude Code locally and want a sandbox, AgentCore isn’t in that lane.

Pick this if: You’re building or deploying agents in the cloud on AWS and want a managed, compliant execution environment. Nobody gets fired for choosing AWS - and sometimes that’s the right call.

5. Islo

All-in-one runtime for AI agents (Claude Code, Cursor, REST API, Python). Guardrails run silently in the background so you can let agents run for hours without babysitting.

Why it’s solid:

- Agents run for hours - no approval prompts in the loop. Defense in silent mode, full autonomy inside the cage. Your host stays out of scope.

- Persistent memory (conversations, decisions, learnings - per project or global), persistent sessions, smart environment detection (`islo init` from repo, no Dockerfiles to write).

- Agent discovers new MCP tools and integrations; skill library updates automatically. You’re not manually wiring every new capability.

- Works with AWS, GitHub, and Kubernetes. The agent sets up its own environment that mirrors yours (packages, tools, configs auto-detected).

- When something actually matters, you get notified: push notifications (iOS, Android, Slack, webhooks) in plain language (“Agent wants to delete the staging database”, not “rm -rf …”), with risk levels and blast radius. Approve or deny in seconds.

Why it might not fit:

- Newer ecosystem. Some features still evolving.

- Another service to run and trust - requires signup and CLI install.

Pick this if: You want to run agents unattended (overnight, long sessions) with defense in the background and smart approvals when it matters - without constant permission dialogs.

How to choose

The “best” sandbox depends on what you care about most - setup time, isolation strength, latency, auditability, cloud integration. For Claude Code specifically, Docker Sandbox is the default because it’s there; if you want stronger isolation and clearer boundaries, look at E2B or Islo. If you’re building agents in the cloud on AWS, AgentCore is the platform play.

No matter which you pick: verify what’s actually isolated. A sandbox is only as good as its configuration and how it’s invoked.
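A quick way to verify this is to run a probe from inside the sandbox and see what it can reach. The sketch below is a starting point, not a complete audit: `reachable_paths` is a hypothetical helper, and the path list is an assumption based on the things this post says should stay out of reach (SSH keys, the host filesystem, the Docker socket). Adjust it to your own setup.

```python
import os
from pathlib import Path
from typing import Iterable, List

# Paths that should NOT be readable from inside a well-isolated sandbox.
# This list is an assumption for illustration, not a complete inventory.
DEFAULT_SENSITIVE_PATHS = [
    Path.home() / ".ssh",          # SSH keys
    Path.home() / ".aws",          # cloud credentials
    Path("/var/run/docker.sock"),  # Docker socket
    Path("/host"),                 # target of a "-v /:/host" style mount
]


def reachable_paths(paths: Iterable[Path]) -> List[Path]:
    """Return the paths the current process can read.

    os.access returns False for paths that are missing or unreadable,
    so nothing here raises on a locked-down filesystem.
    """
    return [p for p in paths if os.access(p, os.R_OK)]


if __name__ == "__main__":
    leaks = reachable_paths(DEFAULT_SENSITIVE_PATHS)
    for path in leaks:
        print(f"reachable from sandbox: {path}")
    if not leaks:
        print("none of the listed paths are reachable")
```

A hit is a prompt to look closer rather than proof of a leak: a sandbox may ship its own empty `~/.ssh`, for example. The point is to check from the agent’s side of the boundary instead of trusting the config.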
